Part 1: History and Background

February 23, 2017

Deep Learning 101 - Part 1: History and Background

tl;dr: The first in a multipart series on getting started with deep learning. In this part we will cover the history of deep learning to figure out how we got here, plus some tips and tricks to stay current.

The Deep Learning 101 series is a companion piece to a talk given as part of the Department of Biomedical Informatics @ Harvard Medical School ‘Open Insights’ series. Slides for the talk are available here and a recording is also available on YouTube.

Each post in this series is a collection of explanations, references and pointers meant to help someone new to the field quickly bootstrap their knowledge of key events, people, and terms in deep learning. In the same way that neural nets use a distributed representation to process data, reference materials for deep learning are scattered across the far-flung corners of the internet and embedded in the dark ether of social media. The hope is that coalescing at least some of these materials into a central location will make it easier for newcomers to start their own walk over this knowledge graph. This collection is intentionally peppered with trivia and articles from the popular press that are relevant to deep learning, both to keep things interesting and to provide context.

These posts are also inspired by the Matt Might Mantra of blogging:

The secret to low-cost academic blogging is to make blogging a natural byproduct of all the things that academics already do.

If Matt Might gives a suggestion, it’s probably a good idea to follow it.

I hope to keep this updated and fresh as new research is produced, so that it can remain a point of reference in the future. So, like Kanye’s Life of Pablo, the posts in this series will continue to change and evolve as new stuff happens. If you see something missing that you think should be added, leave a comment below or shoot me a message on Twitter.

Introduction

Deep learning, over the past 5 years or so, has gone from a somewhat niche field comprised of a cloistered group of researchers to being so mainstream that even that girl from Twilight has published a deep learning paper. The swift rise and apparent dominance of deep learning over traditional machine learning methods on a variety of tasks has been astonishing to witness, and at times difficult to explain. There is a fascinating history that goes back to the 1940s full of ups and downs, twists and turns, friends and rivals, and successes and failures. In a story arc worthy of a 90s movie, an idea that was once sort of an ugly duckling has blossomed to become the belle of the ball.

Consequently, interest in deep learning has skyrocketed, with constant coverage in the popular media. Deep learning research now routinely appears in top journals like Science, Nature, Nature Methods and JAMA, just to name a few. Deep learning has conquered Go, learned to drive a car, diagnosed skin cancer and autism, become a master art forger, and can even hallucinate photorealistic pictures.

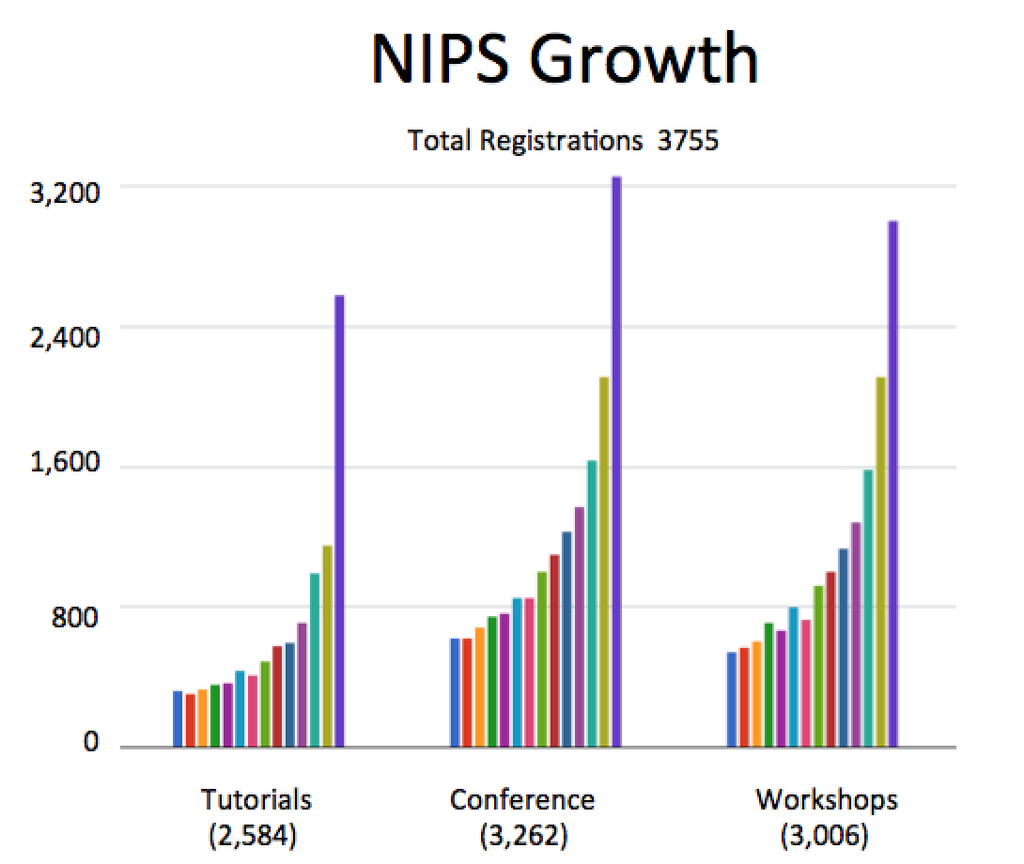

A good surrogate for interest in deep learning is attendance at the Annual Conference on Neural Information Processing Systems (NIPS). NIPS is the main conference for deep learning research and has historically been where a lot of the new methodological research gets published. Interest in the conference has surged in the last 5 years:

The chart only goes to 2015, and this year’s NIPS had over 6,000 attendees, so interest doesn’t look close to leveling off. Confirmation that we were entering a period of serious hype came during NIPS 2013, when Mark Zuckerberg made a surprise visit to recruit deep learning talent and ended up convincing Yann LeCun to be the director of the Facebook AI lab.

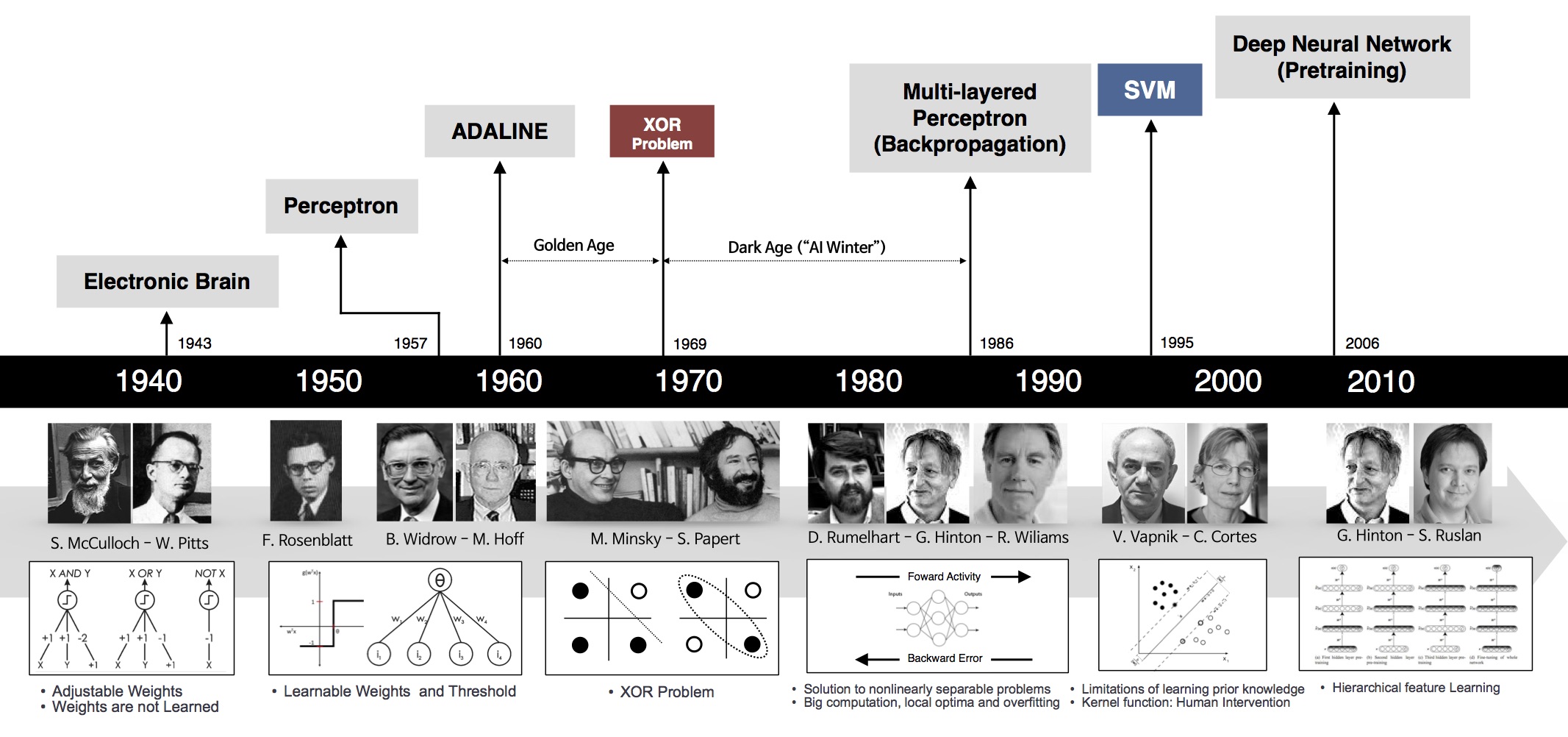

What does all this hype mean? Why is this happening now? At a high level, what we’ve witnessed is the synergistic combination of insights from optimization, traditional machine learning, software engineering, integrated circuit design, and serious sweat equity from a dedicated group of researchers, postdocs, and grad students. Though the main ideas behind deep learning have been in place for decades, it wasn’t until data sets became large enough and computers got fast enough that their true power could be revealed. With that said, it’s worth walking through the history of neural nets and deep learning to see how we got here.

Milestones in the Development of Neural Networks

The idea of creating a ‘thinking’ machine is at least as old as modern computing, if not older. Alan Turing, in his seminal paper ‘Computing Machinery and Intelligence’, laid out several criteria to assess whether a machine could be said to be intelligent, an approach that has since become known as the ‘Turing test’. For some great explorations of variants of the Turing test, check out Brian Christian’s book The Most Human Human, which details his adventures with the Loebner Prize, or watch the amazing, dramatized version in Ex Machina.
